The Mars 2020 Perseverance Rover aims to explore the surface of Mars to analyze its habitability, seek biosignatures of past life, and obtain and cache rock and regolith samples (the outer rocky material of the bedrock). This article describes tools designed to aid with the processing of data to and from the Rover. The processed data provides a plethora of scientific insight into the Rover’s Sampling and Caching Subsystem (SCS) health and performance. Additionally, these tools allow for the identification of important trends and help to ensure that the commands sent to the Rover have been reviewed, approved, and accounted for. Overall, these tools aid the Mars 2020 mission to seek biosignatures of past life by helping engineers better understand the Rover’s operations as it caches rock and regolith samples on Mars.

Author: Annabel R. Gomez

California Institute of Technology

Mentors: Kyle Kaplan, Julie Townsend

Jet Propulsion Laboratory, California Institute of Technology

Editor: Audrey DeVault

Abstract

This paper details a few of the many Python-based Sampling and Caching (SNC) tools developed to aid in processing the uplink and downlink data to and from the Mars 2020 Perseverance Rover. Here, uplink refers to the data sent to the Rover and downlink refers to the data sent back from the Rover. The processed data provides a plethora of scientific insight to ensure the health and performance of the Rover’s Sampling and Caching Subsystem (SCS). One of the problems with such data, however, is that it can be difficult to immediately identify important trends. Thus, I worked to help develop three separate tool tickets. The first ticket identifies and records each unique Rover motor motion event and its respective characteristics. The second ticket helps to make the process of storing Engineering Health and Accountability logs more efficient. Finally, the third ticket, unlike the first two, sorts through the data that is sent to the Rover instead of the data that is sent from the Rover. More specifically, it helps to ensure that all the commands sent to the Rover have been properly reviewed, approved, and accounted for.

Introduction

The Mars 2020 mission aims to explore the surface of Mars using the Perseverance Rover. In July of 2020, Perseverance was sent to Jezero Crater to analyze its habitability, seek biosignatures of past life, and obtain and cache samples of rock and regolith (the outer rocky material of the bedrock). The Rover landed on Mars in February of 2021.

One of the main systems that will carry out these critical tasks is the SCS, which is designed to collect and cache rock core and regolith samples and to prepare abraded rock surfaces. To abrade a rock surface simply means to scrape away or remove rock material. The overall SCS is composed of two robotic systems that work together to perform the sampling and caching functions. The first is a Robotic Arm and Turret on the outside of the Rover, and the second is an Adaptive Caching Assembly on the inside of Perseverance [1, 2].

The SNC team is responsible for performing tactical downlink assessments to monitor the SCS’s health and performance. In addition, they oversee the initiation of strategic analyses and long-term trending to assess the subsystem’s execution across multiple sols, or days on Mars (1 sol is about 24 hours and 39 minutes).

On any given sol, there is a plethora of data generated by the SCS. This data is collated into informative reports that are used to complete each sol’s tactical downlink analysis. SNC engineers assess these reports on downlink and report on the status of the subsystem. New tools have been developed to further analyze and dissect this data by identifying motion events and processing data into summary products stored on the cloud, and to help ensure that only approved and tested sequences and commands are sent to the Rover.

Objectives

During downlink from the Rover, it is often difficult to identify unique data trends immediately. Thus, one of the main goals of this research is to establish a sampling-focused trending dashboard infrastructure containing plots of motor data from specific activities over the course of the Mars 2020 mission. This facilitates the identification of trends that can be compared to the on-hand data collected from testbed operations back on Earth and/or used to create new plots and tables that give a better understanding of the Rover and its behavior. Identifying such trends will pinpoint potential issues and will allow for changes and/or corrections to the SCS’s operations where necessary.

SNC accomplishes these long-term objectives by creating a trending web dashboard filled with plots and other metrics that can be easily used by others working on the project. To establish a baseline for how the SCS should behave during any given activity, multiple instances of the same activity must be collected and compared over time. Prioritized motions and metrics, identified through coordination with Systems Engineers from each of the SCS domains, are stored over the course of the mission and populated on the dashboard.

One challenge, however, is stale data, or data that is not being processed or updated in a timely manner. In a large mission, such as Mars 2020, it is important that data is received and organized quickly so that other teams can complete their respective tasks. Additionally, it is important that long processing times for users are avoided so that data for spacecraft planning can be assessed in a timely manner [2]. This means that data needs to be preprocessed, put in the desired format, and stored so that it can be accessed locally by the dashboard as needed. Once preprocessed, data needs to be updated when new data is downlinked. To combat this challenge, I worked to find a solution that queries and stores the data on a regular basis.

The principal tasks of my research include architecting and developing Python-based tools, or algorithms, for the SNC downlink process. This begins with the receipt of data from the vehicle and continues through the tactical downlink analysis, where it is decided if SNC is GO/NO-GO for the sol’s uplink planning, and ends with updates to any strategic products for analyses. More specifically, there are three focal tools needed to make the trending succeed: 1. A tool to identify new data once available. 2. A tool to preprocess and store new data for trending. 3. A tool to update trending dashboard plots with the latest preprocessed data. With these tools in place, displayed trends in the Rover’s operations will help indicate how the SNC operations should proceed for the sol. This paper covers three specific tickets related to this overall development effort.
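
The way the three focal tools fit together can be illustrated with a minimal Python sketch. It is not the SNC implementation: the `TrendStore` class, the function names, and the choice of min/max/mean as summary metrics are all assumptions made for illustration, and raw data is stood in for by plain lists of numbers keyed by sol.

```python
from dataclasses import dataclass, field


@dataclass
class TrendStore:
    """Hypothetical local store of preprocessed per-sol summaries."""
    summaries: dict = field(default_factory=dict)  # sol number -> metrics dict


def find_new_sols(available_sols, store):
    """Tool 1 (sketch): sols that have data but no stored summary yet."""
    return sorted(set(available_sols) - set(store.summaries))


def preprocess(samples):
    """Tool 2 (sketch): reduce raw samples to a few summary metrics.

    The metrics chosen here are illustrative assumptions.
    """
    return {
        "min": min(samples),
        "max": max(samples),
        "mean": sum(samples) / len(samples),
    }


def update_store(raw_by_sol, store):
    """Preprocess and store only the sols that are new; return their numbers."""
    new_sols = find_new_sols(raw_by_sol, store)
    for sol in new_sols:
        store.summaries[sol] = preprocess(raw_by_sol[sol])
    return new_sols


def dashboard_series(store, metric):
    """Tool 3 (sketch): (sol, value) pairs in sol order, ready to plot."""
    return [(sol, summary[metric]) for sol, summary in sorted(store.summaries.items())]
```

Because `update_store` only touches sols that are missing from the store, running it again on the same data does no additional work, which is one simple way to keep a dashboard from serving stale data without reprocessing everything on each pass.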

Ticket 1: Motion Events

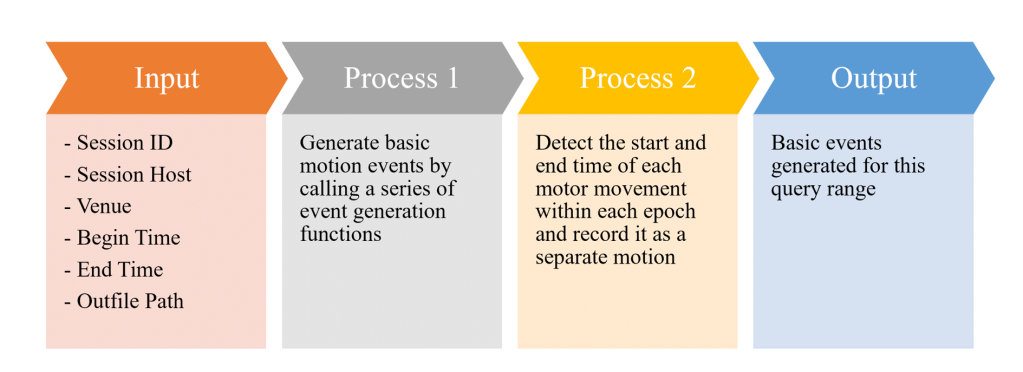

Many of the tickets, or projects, that I worked on this summer focus on the second goal mentioned above: develop a tool to process and store new data for trending. This first ticket contributes to a program that further analyzes and dissects Rover motion events, each representing a unique motion request by a single motor, to better identify their characteristics. Previously, motion events were recorded during a specified epoch; however, the method for determining that epoch led to inconsistent results. The additions I made to this motion detection program now allow it to detect the start and end time of each motor movement within an epoch and properly record it as a separate motion, as is described in Figure 3. It is important that we collect and filter each starting motion by identifying all the mode Event Verification Records (EVRs), a print-statement style of telemetry, that correspond to the start of the motion. Once this tool has identified the begin and end times of each motion event, subsequent tools can extract a great deal of mechanism data from the specified time period and generate the needed statistics and plots. The main learning curve with this ticket was getting used to the Jupyter Notebook and GitHub environment as well as learning how to program collaboratively to build off and/or debug someone else’s code.
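
The idea of splitting an epoch into separate per-motor motions can be sketched as follows. This is only an illustration under stated assumptions: the `Evr` record layout, the `"start"`/`"end"` labels, and the rule of pairing each end record with the most recent open start for the same motor are hypothetical and do not reflect the actual EVR format or the flight tool's logic.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Evr:
    """Hypothetical, simplified EVR: real EVRs carry far more information."""
    time: float  # seconds from an arbitrary reference (assumed)
    motor: str   # motor identifier (assumed)
    kind: str    # "start" or "end" (assumed labels)


@dataclass(frozen=True)
class MotionEvent:
    motor: str
    start: float
    end: float


def find_motion_events(evrs, epoch_start, epoch_end):
    """Return one MotionEvent per start/end pair inside the epoch.

    Each motor is tracked independently, so overlapping motions by
    different motors are recorded as separate events.
    """
    open_starts = {}  # motor -> time of its most recent unmatched start
    events = []
    for evr in sorted(evrs, key=lambda e: e.time):
        if not (epoch_start <= evr.time <= epoch_end):
            continue  # ignore records outside the requested epoch
        if evr.kind == "start":
            open_starts[evr.motor] = evr.time
        elif evr.kind == "end" and evr.motor in open_starts:
            events.append(MotionEvent(evr.motor, open_starts.pop(evr.motor), evr.time))
    return events
```

In this sketch, a start with no matching end inside the epoch is simply left unrecorded. A real tool would need an explicit policy for such incomplete motions.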

Ticket 2: EHA Logs

The second ticket I worked on adds to and edits a previously existing program designed to collect Engineering Health and Accountability (EHA) logs with each downlink pass and store them on the Operational Cloud Storage (OCS) sol by sol. EHA is channelized telemetry collected aboard the Rover and processed on the ground; each channel represents a different type of data and is recorded at a specified rate. EHA logs are essentially large CSV files in which each column represents either a timestamp or a different EHA channel’s value over a given time period. The OCS is a hosted cloud storage location where operations data is stored and maintained.
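
As an illustration of that CSV layout, the sketch below turns channelized samples into a table with one timestamp column and one column per channel. The `(timestamp, channel, value)` sample format, the channel names, and the function name are assumptions for the example and are not the actual EHA schema.

```python
import csv
import io


def eha_to_csv(samples):
    """Build CSV text with a timestamp column plus one column per channel.

    `samples` is an iterable of (timestamp, channel, value) tuples; this
    simplified layout is an assumption, not the real EHA record format.
    Channels with no sample at a given timestamp are left blank.
    """
    samples = list(samples)
    channels = sorted({channel for _, channel, _ in samples})

    # Group values by timestamp so each timestamp becomes one CSV row.
    rows = {}
    for timestamp, channel, value in samples:
        rows.setdefault(timestamp, {})[channel] = value

    buffer = io.StringIO()
    writer = csv.DictWriter(
        buffer,
        fieldnames=["timestamp", *channels],
        restval="",           # blank cell when a channel has no sample
        lineterminator="\n",
    )
    writer.writeheader()
    for timestamp in sorted(rows):
        writer.writerow({"timestamp": timestamp, **rows[timestamp]})
    return buffer.getvalue()
```

Since channels are recorded at different rates, many cells in such a table are expected to be empty, which is part of why these files grow large.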

The storage of the EHA logs is useful for JMP-based analyses of flight data and serves as a stepping stone to generating summary CSV files from flight data. JMP is a program with a GUI used for data analysis and visualization, and it is most often used to analyze sampling data. The main challenge with this ticket was that there was too much data to collect and load at once. As a result, the program kept crashing because each query request required more memory than was available to the Python process. To combat this, I developed a function that takes the desired time range of data and breaks it up into shorter time segments so that smaller sections of data are written to the CSV file in the correct order, as is described in Figure 4. In general, preprocessing the data in this form helps visualize the system state and also gives other tools a compatible format in which to analyze flight data.
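
A minimal sketch of this chunking approach is shown below. It is not the flight tool: `split_time_range`, `write_in_chunks`, and the `query` callable are hypothetical names, and `query(seg_start, seg_end)` is assumed to return the rows for one half-open time segment.

```python
import csv
from datetime import datetime, timedelta


def split_time_range(start, end, step):
    """Yield consecutive (seg_start, seg_end) pairs covering [start, end)."""
    if step <= timedelta(0):
        raise ValueError("step must be a positive duration")
    seg_start = start
    while seg_start < end:
        seg_end = min(seg_start + step, end)  # last segment may be shorter
        yield seg_start, seg_end
        seg_start = seg_end


def write_in_chunks(query, start, end, step, out_file, header):
    """Query one segment at a time and append its rows to the CSV.

    Only one segment's rows are held in memory at once, and segments are
    visited in chronological order, so the file stays time-ordered.
    `query` is a hypothetical callable supplied by the caller.
    Returns the number of data rows written.
    """
    writer = csv.writer(out_file, lineterminator="\n")
    writer.writerow(header)
    total_rows = 0
    for seg_start, seg_end in split_time_range(start, end, step):
        rows = query(seg_start, seg_end)
        writer.writerows(rows)
        total_rows += len(rows)
    return total_rows
```

The segment length is a trade-off: shorter segments lower peak memory use but increase the number of queries, so in practice the step would be tuned to the data rate of the channels being requested.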

Ticket 3: Sequence Tracking Tools

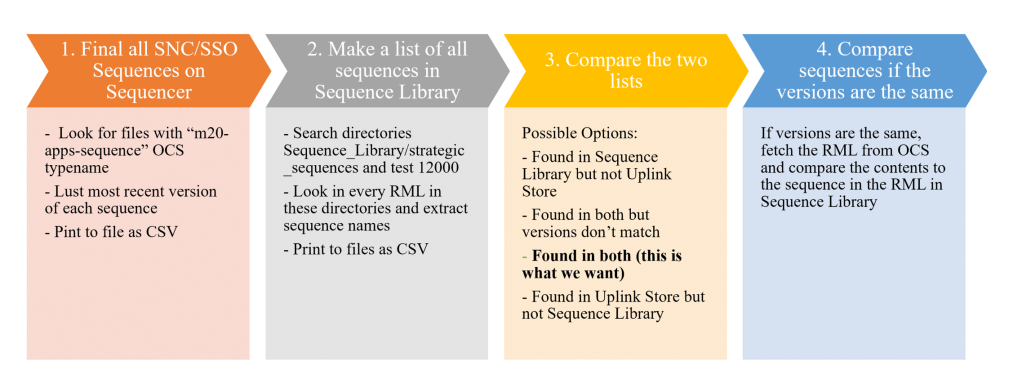

The first two tickets are on the downlink side of the SNC operations, processing and analyzing data sent back from the Rover. To gain further experience with the many sampling and caching tools the SNC team develops, I took on a next ticket that aids uplink operations: processing data before it is sent to the Rover. When it comes to sending commands to the Rover, many sequences are written, and all of their versions must be maintained and tracked. There are two places where sequences are stored: the uplink store and the sequence library. The sequence library is where requests are stored, such as robotic commands for sampling. Once the sequences have been tested and approved, they are sent to the uplink store, from which they are delivered to the Rover. Specifically, in this ticket, I helped make progress towards ensuring that all of the commands that are sent to the Rover have been properly reviewed, approved, and accounted for. As seen in Figure 5, there are several steps to complete for this task. To start, I developed a program that obtains a list of the sequences merged into the library along with their version information and specifications. Then, I developed another program that pulls a sequence list down from the uplink store to get a list of what has been sent there. In the upcoming steps (3 and 4), a cross-reference will be created to check the two lists against each other and, where the versions are the same, to compare the sequences themselves. This ensures that the sequences sent to the Rover match those version-controlled in the sequence library.
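
Since the cross-reference steps were still upcoming at the time of writing, the following is only a sketch of what such a check might look like, not a description of the eventual tool. It assumes each list has already been reduced to a dictionary mapping a sequence name to a `(version, text)` pair; the function name, the category names, and that data layout are all hypothetical.

```python
def cross_reference(library, uplink_store):
    """Classify every sequence found in the uplink store.

    Both arguments map sequence name -> (version, text); this layout is an
    illustrative assumption. Contents are compared only when the versions
    agree, mirroring the two-stage check described in the text.
    """
    report = {
        "matched": [],
        "content_differs": [],
        "version_mismatch": [],
        "not_in_library": [],
    }
    for name in sorted(uplink_store):
        sent_version, sent_text = uplink_store[name]
        if name not in library:
            report["not_in_library"].append(name)
            continue
        library_version, library_text = library[name]
        if library_version != sent_version:
            report["version_mismatch"].append(name)
        elif library_text != sent_text:
            report["content_differs"].append(name)
        else:
            report["matched"].append(name)
    return report
```

Under this scheme, any sequence that lands outside the `matched` category would be flagged for review before it is considered accounted for.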

Conclusion

This research helped to establish an efficient sampling-focused trending dashboard infrastructure and improved the ability to identify trends in Rover data from specific activities over the course of the Mars 2020 mission. I developed and improved upon three main Python-based tickets involved in the uplink and downlink of Rover data. The first ticket identifies and records each individual, unique Rover motor motion event and its respective characteristics, such as the start and end times. The second ticket helps to make the process of storing EHA logs more time efficient to better visualize the system state. Finally, the third ticket, unlike the first two, works with the data that is sent to the Rover instead of from the Rover. More specifically, it works to ensure that all of the commands that are sent to the Rover have been properly reviewed, approved, and accounted for. Overall, the tickets I worked on will continue to aid the Mars 2020 mission of seeking biosignatures of past life by helping SNC engineers better understand the Rover’s operations as it caches rock and regolith samples on Mars.

References

- Anderson, R.C., et al. “The Sampling and Caching Subsystem (SCS) for the Scientific Exploration of Jezero Crater by the Mars 2020 Perseverance Rover.” Space Science Reviews, Springer Netherlands, link.springer.com/article/10.1007/s11214-020-00783-7.

- Allwood, A.C., Walter, M.R., et al. “Mars 2020 Mission Overview.” Space Science Reviews, Springer Netherlands, link.springer.com/article/10.1007/s11214-020-00762-y.

- “New Tools to Automatically Generate Derived Products upon Downlink Passes for Mars Science Laboratory Operations.” IEEE Xplore, ieeexplore.ieee.org/document/9172647.

Acknowledgments

I would like to thank my incredible mentors Kyle Kaplan and Julie Townsend as well as Sawyer Brook for providing research guidance, support, and technical assistance; Michael Lashore for taking the time to allow me to shadow his SNC downlink shift; Frank Hartman for finding and funding this opportunity; and Caltech Northern California Associates and Mary and Sam Vodopia for the fellowship to participate in Caltech’s Summer Undergraduate Research Fellowship (SURF).

Further Reading

The Sampling and Caching Subsystem (SCS) for Scientific Exploration of Jezero Crater by the Mars 2020 Perseverance Rover

Mars 2020 Mission Overview

The research was carried out at the Jet Propulsion Laboratory, California Institute of Technology, and was sponsored by the SURF internship program and the National Aeronautics and Space Administration (80NM0018D0004).

© 2021. California Institute of Technology. Government sponsorship acknowledged.